In the future, those with substantial hearing loss may no longer need a doctor to surgically implant a cochlear device into their ear to restore their sense of sound.

If researchers at Colorado State University are successful, patients may simply pop a retainer into their mouths. The team of engineers and neuroscientists is developing a hearing device that bypasses the ear altogether and puts words in the mouth.

The technology relies on a Bluetooth-enabled earpiece to detect sound and send electrical impulses to an electrode-packed retainer that wearers press their tongue against to “hear.”

“It’s much simpler than undergoing surgery and we think it will be a lot less expensive than cochlear implants,” said John Williams, associate professor in the Department of Mechanical Engineering and the project lead.

Finding his tongue

Williams first conceived the idea for the device during what he calls a “research midlife crisis.”

The mechanical engineer has spent much of his career designing and building electric-propulsion systems for space travel. Though he loves the work and still conducts research in that area, Williams says many of the challenges have been overcome.

“NASA now uses electric propulsion in space,” he said. “There is more work to be done to optimize the technology, but we’ve accomplished what we set out to do.”

Williams wanted to expand his research and became interested in neuroscience and sensory substitution — training the brain to receive information from another source. (American Sign Language and Braille are both examples of sensory substitutes.)


Around the same time, Williams developed tinnitus, a constant, high-pitched ringing in his ears. Years of working around powerful vacuums used to simulate space zapped his ability to hear high frequencies.

That diagnosis led him to research cochlear implants and their pros and cons.

Williams considered all of the information and eventually hit upon his new research project: hearing with the tongue.

The tongue contains thousands of nerves, and the region of the brain that interprets touch sensations from the tongue is capable of decoding complicated information.

“What we are trying to do is another form of sensory substitution,” Williams said.

Hearing with your tongue

Unlike hearing aids, which amplify sound, cochlear implants circumvent damaged areas of the ear and stimulate the auditory nerve directly.

Microphones outside the ear detect sounds and send them to a speech processor, which analyzes the information and transmits it to a receiver where it is converted into electric impulses. The implant sends those impulses directly to the auditory nerve. With training, the brain learns to recognize these impulses as useful sound information.


The CSU device works in much the same way, except that the electric impulses are sent via Bluetooth to a retainer-like mouthpiece packed with electrodes. When users press their tongue against the device, they feel a distinct pattern of electric impulses as a tingling or vibrating sensation.

The idea is that, with training, the brain will learn to interpret specific patterns as words, thus allowing someone to “hear” with their tongue.

The concept is not as far-fetched as it first sounds.

Phoneticists can identify specific words from the series of black lines on a sonogram. And people who lose their sight can learn to “read” words again with Braille.

The notion that the human brain is “set” by adulthood and therefore unable to change how it receives and interprets information is inaccurate, said Leslie Stone-Roy, assistant professor in the College of Veterinary Medicine and Biomedical Sciences.

“We have a remarkable amount of plasticity in our brain even as adults,” she said. “We now know that it is able to make changes and adapt to changes in incoming information, especially stimuli that are of importance to the individual.”

Mapping the tongue

Williams and JJ Moritz, a CSU graduate student, have spent the past year building and testing prototypes of the technology. Their initial results are promising enough that they have filed a provisional patent and launched Sapien LLC, a start-up company, to help advance the work.

But there is still much work to be done to refine and improve the hearing-with-the-tongue technology. The electrode-dense mouthpiece is a good example.

As engineers, Williams and Moritz understand how to pack electrodes into a small space, but not necessarily where they should be positioned on the mouthpiece so the tongue can pick up the strongest patterns.

That’s why they asked Stone-Roy, a neuroscientist who studies taste receptors on the tongue, to join the project.

She is helping Moritz and Williams determine which parts of the tongue detect electrical impulses and whether those areas are consistent from person to person. They have launched a new study in which participants place an array of electrodes in their mouth and report where they feel electrical impulses and how strong they are.

“Basically, we are mapping the nerves on the tongue,” Stone-Roy said. “There isn’t a lot of information out there about the nerves on the tongue and their ability to sense electrical impulses.”

The results are important. If nerve patterns are consistent, the mouthpiece can be standardized with electrodes placed in key receptor areas that everyone has.

If not, it will likely have to be customized to each wearer, or to specific sub-populations of users, which will affect the cost of the technology.

Why a new device?

Williams and his team believe that, once refined, their technology could turn the world of hearing devices on its ear.

Although cochlear implants are considered the most successful medical prosthesis in the world, they are far from perfect.

Doctors insert the devices into the ear structure near the auditory nerve. The surgical procedure has inherent risks and can cause additional damage to the sensory cells in the inner ear that transmit sound to the auditory nerve.


Cochlear implants aren’t for everyone. They tend to work better in younger patients, such as infants with some hearing loss. Candidates must have most of their auditory system intact for the implants to work.

And even 30 years after the U.S. Food and Drug Administration approved their use, the devices are still expensive. In the U.S., patients pay at least $100,000 for the pre-screening, implants, surgery and follow-up therapy.

“Cochlear implants are very effective and have transformed many lives, but not everyone is a candidate,” Williams said. “We think our device will be just as effective but will work for many more people and cost less.”


Neuroscientist speaks in tongues

By Kristen Browning-Blas

Talk to Dr. Leslie Stone-Roy about her study of taste buds, and you will be very aware of your own tongue.

We talk with it, taste with it, stick it out, and bite it, both figuratively and literally. And for many of us, that’s the only time we really notice the muscular, nerve-filled organ.

Yet studying how the tongue “talks” to the nervous system has been a life’s work for Stone-Roy, assistant professor in Colorado State’s Department of Biomedical Sciences. She has spent 20 years researching how we interpret taste.

So when John Williams, CSU associate professor of mechanical engineering, needed an expert in the neuroanatomy of the tongue to help develop his hearing device prototype, he just strolled across campus to Stone-Roy’s lab in the Anatomy Building.

“I’ve looked at the embryology of taste buds. I’ve looked at cell transduction – how taste cells take the information from sweet food, for example, and transmit that into a signal that the nervous system can decode. I’ve also worked on how taste cells talk to the nervous system – what kinds of neurotransmitters they release,” Stone-Roy explained. “In the process of studying all that, I have looked at a lot of tongues.”

Inside those tongues are various nerves that carry information about taste, touch, pain and movement.

The nerves carrying touch information from the tongue to the central nervous system – the spinal cord and brain – are very similar to those that carry information from the skin surface of the body to the central nervous system.

Understanding how they work could lead to new electronic devices, such as tongue-controlled wheelchairs and Williams’ hearing device.

On the taste front, Stone-Roy is studying overweight mice to determine whether their taste cells differ from those in normal-weight mice. While much obesity research focuses on the hypothalamus, which regulates hormone production, understanding the role of taste cells in the desire to eat might lead to different types of obesity treatment, Stone-Roy said.

Along with tongue-mapping and taste research, Stone-Roy teaches undergraduate and graduate-level courses in neuroscience, including the new Introduction to Systems Neurobiology, part of CSU’s bachelor’s degree in neuroscience, introduced in fall 2014. As a charter member of the neuroscience honor society Nu Rho Psi, she is looking forward to nominating students for the new CSU chapter.

She also volunteers in the local schools, judging elementary science fairs and mentoring high school students. Stone-Roy is passionate about Brain Awareness Week, an outreach program that trains 70 CSU students to present interactive stations on neurobiology, sensory systems and brain anatomy in high schools.

“I love teaching and I love outreach; it keeps me out of trouble,” said Stone-Roy, with tongue in cheek.

Media Assets


High-Resolution Photos

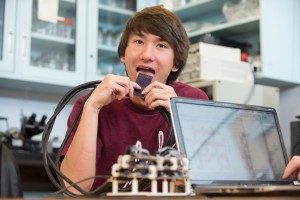

Dr. John Williams, an associate professor of mechanical engineering; Dr. Leslie Stone-Roy, an assistant professor of biomedical sciences; and JJ Moritz, a graduate student, are developing a device to hear with your tongue.

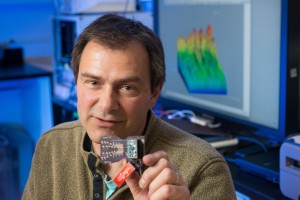

Dr. John Williams, who is leading the project, envisions the device as being a non-surgical alternative to cochlear implants.


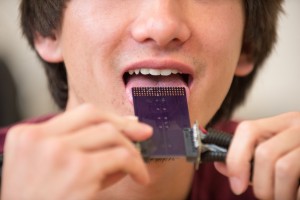

CSU researchers are testing the nerves on the tongue to see which are best at detecting the electrical impulses necessary for the device to work.
